23 CIO Events to Attend in 2026

Why 60% of AI Projects Will Fail This Year (& How CIOs Can Beat the Odds)

Enterprise-grade AI adoption is no longer optional; it's essential for gaining a competitive advantage. But before launching new AI projects, let's look at the three core reasons most AI projects struggle to deliver results and how CIOs can overcome them.

This is the first post in the “CIO’s Guide to AI” article series. Don’t miss the upcoming articles on how to launch successful AI projects and on the top AI use cases.

Gartner predicts that through 2026, organizations will abandon 60% of AI projects that are not supported by AI-ready data. If you’re a CIO reading this, that statistic should make you pause. After the initial wave of AI adoption in 2024 and the continued maturing of AI technologies in 2025, why are AI projects still failing at such alarming rates?

The answer isn’t what most executives expect. It’s not about choosing the wrong model or lacking technical expertise. The reason AI projects fail to advance past the pilot stage is far more fundamental: fractured knowledge foundations. Organizations are trying to deploy AI on knowledge infrastructure and content that were never designed, governed, or prepared for it.

As AI spending surges 280% year-over-year (from 2024 to 2025), the pressure on CIOs has never been greater. AI adoption has become a survival strategy: early adopters will gain a compounding advantage that latecomers cannot reproduce. Yet rushing into AI without addressing the underlying knowledge crisis is a recipe for failure. To help CIOs navigate these challenges, we explain the three core reasons AI projects fail, then examine how CIOs should change their approach.

The Real Reason AI Projects Are Failing: A Knowledge Crisis

More than 80% of AI projects struggle to deliver results, and that figure climbs to 95% for generative AI pilots. Three distinct issues lie behind these failures. Let’s look at how organizations manage, structure, and deploy their knowledge, and how each of these relates to AI success.

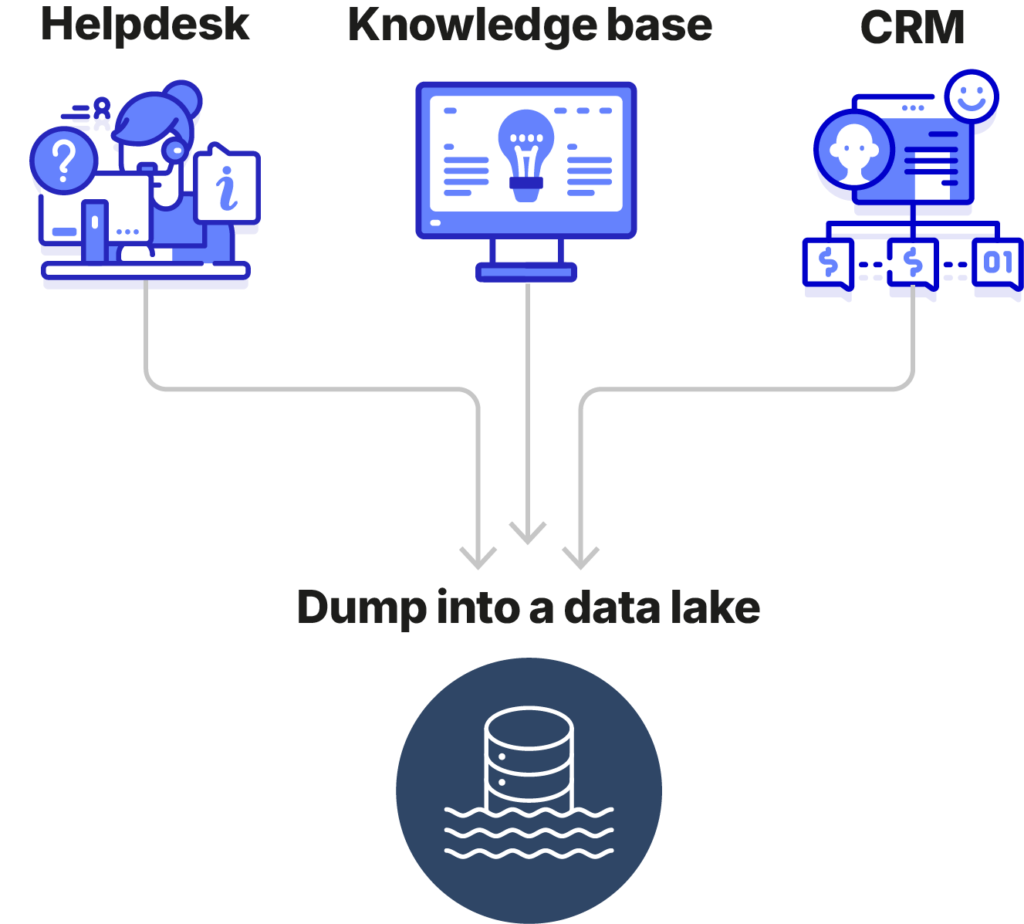

1. Data is Trapped in Silos and Inaccessible

Most enterprise technology stacks were assembled over years, with each system solving a specific departmental need. These legacy systems typically weren’t built for AI integration. The result is data and content scattered across incompatible, inaccessible systems. CRMs, knowledge bases, helpdesks, CMSs, ERPs, and countless other repositories operate in isolation, and each holds a piece of organizational knowledge. Yet without connecting these platforms, AI struggles to build a complete and accurate picture of the organization’s knowledge.

Some organizations have tried to resolve these challenges by developing data lakes. However, without robust governance, real-time synchronization, or high-performance infrastructure, their data lakes often degrade into data swamps.

The consequence is clear: businesses struggle to access and use their own information efficiently. If an AI system cannot reliably locate the right information when it needs it, it cannot generate actionable insights or business value. The issue isn’t data volume or AI tool selection; it’s the lack of a unified, well-structured knowledge foundation.
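To make the idea of a unified knowledge foundation more concrete, here is a minimal Python sketch. It assumes two hypothetical source systems, a CRM and a helpdesk, whose exports use different field names. Each small adapter maps its source's records into one shared `KnowledgeRecord` shape. The shape keeps the originating system and ID, so every answer can be traced back to its source, and it carries over the source's access groups. All field names and functions here are illustrative assumptions, not any product's API.

```python
from dataclasses import dataclass, field


@dataclass
class KnowledgeRecord:
    """One shared shape for knowledge from any source system (hypothetical schema)."""
    source: str                  # system the record came from, e.g. "crm"
    source_id: str               # the record's ID in that system, kept for traceability
    title: str
    body: str
    allowed_groups: set[str] = field(default_factory=set)  # access groups copied from the source


def from_crm(row: dict) -> KnowledgeRecord:
    # Hypothetical CRM export format: AccountNote_ID, Subject, Note, Team
    return KnowledgeRecord(
        source="crm",
        source_id=str(row["AccountNote_ID"]),
        title=row["Subject"],
        body=row["Note"],
        allowed_groups={row["Team"]},
    )


def from_helpdesk(ticket: dict) -> KnowledgeRecord:
    # Hypothetical helpdesk export format: id, summary, resolution, visible_to
    return KnowledgeRecord(
        source="helpdesk",
        source_id=str(ticket["id"]),
        title=ticket["summary"],
        body=ticket["resolution"],
        allowed_groups=set(ticket["visible_to"]),
    )


def unify(crm_rows: list[dict], tickets: list[dict]) -> list[KnowledgeRecord]:
    """Map every source into the shared schema so one retrieval layer can index it all."""
    return [from_crm(r) for r in crm_rows] + [from_helpdesk(t) for t in tickets]


records = unify(
    [{"AccountNote_ID": 101, "Subject": "Renewal terms",
      "Note": "Acme renews annually in March.", "Team": "sales"}],
    [{"id": 7, "summary": "VPN login fails",
      "resolution": "Reset the MFA token.", "visible_to": ["support", "it"]}],
)
for r in records:
    print(r.source, r.source_id, r.title)
```

With this structure, connecting another repository means writing one more adapter into the shared schema rather than another point-to-point integration.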

2. Most Content Isn’t AI-Ready

Even when the information exists, its format and structure often make it difficult for AI to use effectively. According to IDC, IT and business leaders cite inadequate data quality as the #1 factor behind AI projects falling short.

Here, “content” means natural-language text, which is less structured and less machine-readable than database records or JSON.

Project success hinges on accurate and reliable AI outputs, and those outputs are only as good as the content feeding the model.

Much organizational content was written by people, for people. It is structured around how humans navigate and read, not how machines process and interpret text. Take a PDF, for example. Complex layouts, such as multi-column pages, tables, and repeated headers and footers, introduce ambiguity when the text is extracted. This creates a point of failure when AI tries to draw value from the document, and the result is often output that is confusing or irrelevant. Although LLMs are built to interpret natural language, content designed for human readers remains difficult for machines to ingest and comprehend.

Much content also lacks sufficient enrichment, such as proper tagging, metadata, or indexing. When information is missing context, AI systems cannot fully judge its relevance. This leads to inaccurate retrieval, weaker personalization, and often hallucinations.
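As a minimal illustration of enrichment, the Python sketch below splits a document into paragraph-sized chunks and attaches metadata (source, title, position, tags) to each one. It then shows how a retrieval step can use a tag to narrow the candidates. The chunking rule (split on blank lines), the metadata fields, and the sample documents are simplifying assumptions. Real pipelines often use layout-aware parsers and a controlled taxonomy instead.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    metadata: dict  # context a retriever can filter and rank on


def enrich(doc_text: str, source: str, title: str, tags: list[str]) -> list[Chunk]:
    """Split a document on blank lines and attach document-level metadata to each chunk."""
    paragraphs = [p.strip() for p in doc_text.split("\n\n") if p.strip()]
    return [
        Chunk(
            text=para,
            metadata={
                "source": source,
                "title": title,
                "position": i,          # where the chunk sat in the original document
                "tags": sorted(tags),   # taxonomy terms, e.g. topic or department
            },
        )
        for i, para in enumerate(paragraphs)
    ]


def filter_by_tag(chunks: list[Chunk], tag: str) -> list[Chunk]:
    """Keep only chunks whose metadata carries the requested tag."""
    return [c for c in chunks if tag in c.metadata["tags"]]


kb = enrich("Refunds are issued within 14 days.\n\nExchanges require a receipt.",
            source="kb", title="Returns policy", tags=["returns"])
kb += enrich("Reset your password from the login page.",
             source="kb", title="Account help", tags=["accounts"])
returns_only = filter_by_tag(kb, "returns")
print(len(kb), len(returns_only))  # 3 2
```

Without the `tags` and `title` fields, the retriever would have only raw text to match on. With them, a question about returns can be limited to returns content before any ranking happens.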

68% of organizations report that more than half of their files contain at least one issue that could negatively impact GenAI initiatives.

Source: Shelf AI

3. Companies Have Inadequate Security and Controls

The third obstacle to AI project success is security. When AI systems access an organization’s data to generate responses or take actions, the boundaries around what they can and should access become critical. AI projects that lack controlled operational boundaries are often exposed to security vulnerabilities. The risks fall into three broad categories:

- Data privacy risks: Sensitive or personally identifiable information may be surfaced to users who shouldn’t see it.

- Data security risks: Data can leak when the access controls that govern an existing repository are not faithfully applied to the AI layer sitting on top of it. This happens when access controls are misconfigured, when permissions are lost as content is transferred into the AI system, or when attackers exploit vulnerabilities.

- Compliance risks: If an AI system processes or presents regulated data without adequate transparency or audit trails, the organization may find itself in breach of regulatory requirements, even if it was otherwise compliant.
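One common way to keep the AI layer from bypassing existing access controls is to enforce them at retrieval time. Each candidate passage carries the permissions of its source, and anything the requesting user cannot already see is dropped before it reaches the model. The Python sketch below illustrates that idea under simple assumptions: group-based permissions, an in-memory list of passages, and a plain list standing in for an audit log. The names `Passage`, `authorized_context`, and `audit_log` are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Passage:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]  # copied from the source repository's ACL


audit_log: list[dict] = []  # stand-in for a real append-only audit store


def authorized_context(user: str, user_groups: set[str],
                       candidates: list[Passage]) -> list[Passage]:
    """Drop passages the user could not open in the source system, and log the decision."""
    allowed = [p for p in candidates if p.allowed_groups & user_groups]
    withheld = [p.doc_id for p in candidates if not (p.allowed_groups & user_groups)]
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "returned": [p.doc_id for p in allowed],
        "withheld": withheld,
    })
    return allowed


docs = [
    Passage("hr-001", "Salary bands by job level.", frozenset({"hr"})),
    Passage("kb-042", "How to request a new laptop.", frozenset({"all-staff"})),
]
context = authorized_context("jdoe", {"all-staff", "engineering"}, docs)
print([p.doc_id for p in context])   # ['kb-042']
print(audit_log[-1]["withheld"])     # ['hr-001']
```

A passage is kept only when the user shares at least one group with it. As a result, a passage with no recorded permissions is never returned, so the check fails closed. The log entry records what was returned and what was withheld, which supports the audit trail that compliance requires.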

These security challenges require proactive strategies before launching AI projects, not reactive fixes.

What CIOs Need to Change

Overcoming these challenges doesn’t require scrapping existing systems or rebuilding IT from the ground up. It requires a deliberate shift in how organizations think about the relationship between AI and the content it depends on.

That means treating knowledge readiness as a project requirement, not an afterthought. It means auditing content so it is structured, tagged, and governed in a way that an AI system can use. And it means ensuring that boundaries around sensitive data are replicated, not bypassed, when AI enters the workflow.
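A content audit can start as a simple automated check. The Python sketch below assumes each document is described by a dictionary with hypothetical fields (`title`, `tags`, `owner`, `last_reviewed`). It flags the gaps that would weaken AI retrieval or governance. The required fields and the 365-day review window are illustrative choices, not a standard.

```python
from datetime import date

REQUIRED_FIELDS = ("title", "tags", "owner")  # assumed minimum metadata for AI use
MAX_REVIEW_AGE_DAYS = 365                     # assumed freshness policy


def audit(doc: dict, today: date) -> list[str]:
    """List the issues found in one document's metadata; an empty list means no issues."""
    issues = [f"missing {name}" for name in REQUIRED_FIELDS if not doc.get(name)]
    reviewed = doc.get("last_reviewed")
    if reviewed is None:
        issues.append("never reviewed")
    elif (today - reviewed).days > MAX_REVIEW_AGE_DAYS:
        issues.append("review overdue")
    return issues


docs = [
    {"id": "a", "title": "VPN setup", "tags": ["it"], "owner": "it-ops",
     "last_reviewed": date(2025, 11, 1)},
    {"id": "b", "title": "Old pricing", "tags": [], "owner": None,
     "last_reviewed": date(2023, 1, 15)},
]
report = {d["id"]: audit(d, today=date(2026, 1, 15)) for d in docs}
flagged = sum(1 for issues in report.values() if issues)
print(report["b"])                                        # ['missing tags', 'missing owner', 'review overdue']
print(f"{flagged}/{len(docs)} documents need attention")  # 1/2 documents need attention
```

Run across a whole repository, a check like this yields a concrete backlog of fixes to complete before an AI project depends on that content.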

CIOs who get this right will find that their AI projects move to production with far less friction. The margin for hesitation is shrinking. Organizations that stick to their pre-AI roadmaps or limit themselves to trial-and-error risk being surpassed by agile competitors.

Beating the Odds with a Strong Knowledge Foundation

Sixty percent of AI projects failing is not an anomaly; it is a signal. The organizations that beat those odds will not necessarily be the ones with the biggest budgets or the most sophisticated models. They will be the ones that take the time to get their knowledge infrastructure right before building AI on top of it.

CIOs must prioritize AI-ready content before project deployment. This means:

- Breaking down data silos and creating unified, well-governed knowledge foundations.

- Transforming unstructured content into AI-ready knowledge with proper structure, metadata, and taxonomies.

- Implementing security-first approaches with proper access controls and compliance frameworks.

The AI era represents a reinvention of IT, where technology strategy and business strategy finally converge. The time to act is now. Be part of the 40%. Continue the journey in our “CIO’s Guide to AI” series with the article “How to Launch Successful AI Projects”.